I love technology. I’ve spent most of my career writing about it and I don’t want to do anything else. But there’s a negative tone that runs through my recent work. Why is that?

Because a lot of what is referred to as “tech” in our current age is better described as “business”, and business isn’t cool or fun. The companies that call themselves tech companies know this, which is why they call themselves tech companies even if that doesn’t make sense.

You probably would never think of a taxi company or a hotel chain as cool, for example, particularly if they did things like exploit their workers or drive up housing costs. Airbnb and Uber, though, were long seen as innovative tech companies, despite doing exactly that. Calling yourself a tech company gave you a lot of cover in that era.

I started writing about tech for a mainstream audience in the early 2010s, when the tech press was best understood as an enthusiast press. We thought the things we were writing about were neat, and to be fair, a lot of it was. But our enthusiasm for shiny new things made us blind to the economics of the underlying businesses. A huge wake-up call, for a lot of us, came in 2016, when social networks obsessed with increasing engagement skewed the culture away from nuance and toward conflict so profoundly that a racist gameshow host managed to troll his way to the presidency of the United States. It’s hard to see technology as a neutral force in the world after that, and a lot of people working in technology had to question their value systems.

Which brings me to AI. I know: I talk about this a lot lately. I think part of that is that I can’t make the tech enthusiast part of my brain get excited about it. I have tried, on a regular basis, to think of some way to use this technology in my day-to-day life. I am extremely motivated on this: my job is to find new technology and tell people how to get the most out of it. And I found a couple of uses, but to be honest, all this time later I still don’t use it on a regular basis.

Now, this might be because I’m already a pretty good writer—the system is much better at making bad writing passable than it is at making passable writing good (let alone making good writing great). There’s a reason why “AI-generated” is already online slang for something that is inauthentically or poorly written.

But even if the system were great at producing exceptional writing, I wouldn’t love it, because it feels more like a business strategy than an actual product. When I listen to Microsoft, Apple, or OpenAI executives talk about it, it always feels like they have put way more thought into leveraging the tech to build or protect a monopoly than into what the thing is actually for. Think about it: what use cases are the companies pushing AI actually presenting?

A standard use is “writing emails”, which I find laughable. Honestly, if you’re emailing me and thinking about using AI to write it, save us both some time and just send me the prompts you were going to give the chatbot. By definition all of the relevant information is there.

Outside of vague uses like that—and the possible exception of writing code, which most people don’t do—I’m hearing next to nothing about actual uses for everyday people and a lot of bloviating about “the future”. None of this is to say that their predictions won’t come true—I have no idea—but the language makes it clear they care a lot more about winning that future than they do about what the future will actually be.

And I think that’s why talk of AI bums me out. AI isn’t a solution looking for a problem—it’s a market strategy made by people who aren’t even thinking about problems. Tech companies want to make sure they own the future, even when they’re not sure what the future is going to be. It’s so, so boring, and it’s making me very sleepy.

AI ripped me off (and gave me killer abs)

…or maybe I just hate AI because it’s destroying the business I work in. For those who don’t know, there are a bunch of websites that scrape articles from legitimate news sites, use AI to rewrite them, and then publish the results en masse. This walks right up to the line of copyright infringement and spits over it into the faces of people who actually create things. The average site like this publishes hundreds of articles a day.

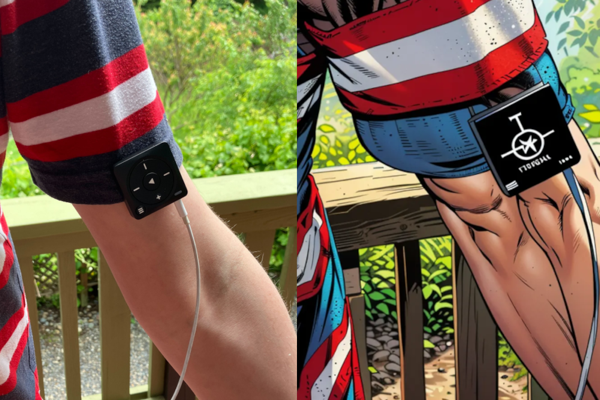

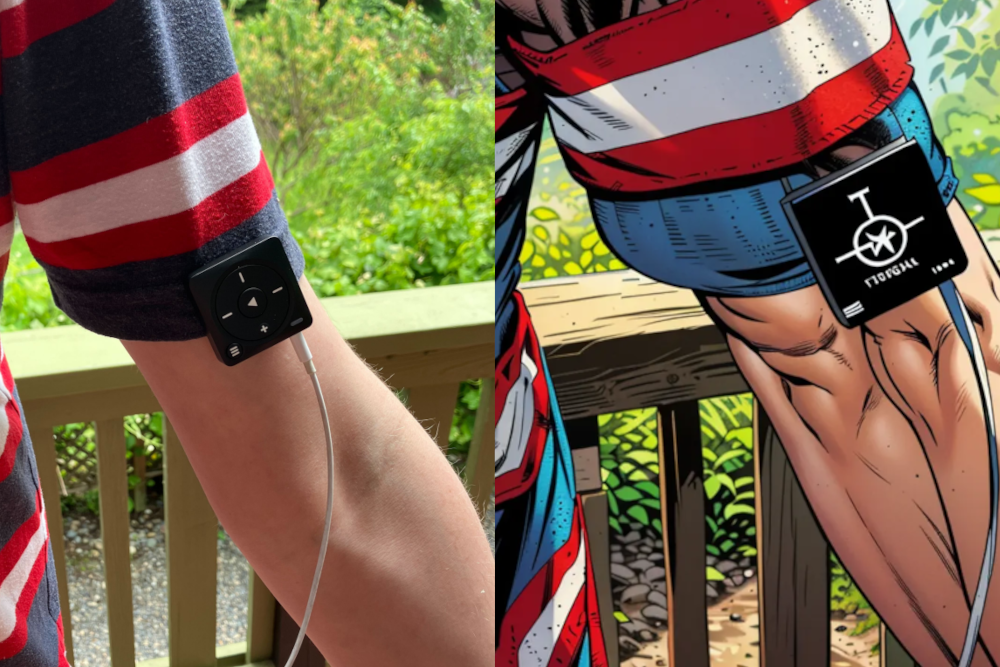

I bring this up because one such site took my review of the Mighty 3, which is a sort of iPod shuffle for Spotify users, and not only re-worded it but also re-did the images. Here’s a before and after:

You’ll notice that the device I’m reviewing is completely unrecognizable, making the image useless. You’ll also notice that I’m freaking ripped. I checked: they muscle up every single photo to this extent, I assume because people click on such things more often? Hilarious, whatever the reason. What a great illustration of how far gone the internet is.

How to Quit Google, According to a Privacy Expert

Some companies are easy to quit. If I decide I don’t like Coca-Cola anymore I can simply stop drinking Coke. Sure, the company makes more than just Coke, so I would need to do some research to figure out which products it does and doesn’t make, but it’s theoretically possible.

Quitting Google isn’t like that. It makes a lot of products, many of which you depend on to live your digital life. Leaving a company like that is like a divorce, according to an expert I talked to. “It’s not easy, but you feel so much better at the other side,” said Janet Vertesi, a sociology professor at Princeton who publishes work on human-computer interaction. “Think of a friend who gets a divorce and is so happy to be out. That could be you. That’s how it feels to leave Google.”

More stuff I wrote

- The Best Google Search Alternatives for Most People (Lifehacker): Just in case you’re looking for an alternative.

- Is the Y cut the best way to slice a sandwich? (PopSci): Stopped writing about tech for a while so I could focus on something important.

- Ventoy Is a Better Way to Make a Bootable Disk for PC and Linux (Lifehacker): This is the real nerd shit.

- Use ‘Bridgy Fed’ to Connect Mastodon and Bluesky (Lifehacker): No, wait, this is.

@JustinPotBlog I’m playing around with putting my whole newsletter on the website—let me know if it works here.

@jhpot @JustinPotBlog I can see the whole thing, but I was confused at first that the image that was referenced in the middle appears at the bottom.

@cgervasi @JustinPotBlog This seems to be a quirk of pushing blog posts out to the Fediverse—images don’t show up in place. I might have to just, like, not reference images in the text of a post. Still feeling this out. You can see how it’s formatted on my website here: https://justinpot.com/tech-is-cool-business-is-boring/

@JustinPotBlog I think you're right. Even in coding, AI doesn't raise the ceiling but raises the floor. A passable developer doesn’t need it; for them it’s just an accelerator. It helps bad developers write boilerplate code faster, but it can also hide autogenerated bugs in passable developers’ code. It also hallucinates some weird code. Cory Doctorow describes AI as a "reverse centaur": instead of a human brain with a strong horse body, you get a weak human body with a horse brain.

@tatonka @JustinPotBlog Reverse centaur is such a great analogy

@jhpot @JustinPotBlog it blew my mind. Apparently it’s a term in automation circles. Here’s the most recent article he wrote on it; it’s eye-opening: https://doctorow.medium.com/https-pluralistic-net-2024-04-01-human-in-the-loop-monkey-in-the-middle-14e72bd46b7a